Election Surveys: Beyond the Numbers and the “Horse Race”

SURVEY RESULTS on voter preferences are a staple of election reports, drawing wider public interest as the election nears. Over the years, CMFR’s election monitors have found that survey reports occupy prominent space on newspaper front pages and take up much airtime on television newscasts.

On March 4, Pulse Asia released the results of its February 2016 nationwide survey on the May 2016 elections, which found Senator Grace Poe leading the pack of presidential candidates. Social Weather Stations (SWS) followed shortly after, releasing on March 14 the results of a survey commissioned by BusinessWorld and conducted from March 4 to 7. But while media reports focused on where the contenders for national posts stood in the surveys, they were limited to tracking who was gaining or leading, with little effort to explain the significance of the numbers and rankings.

CMFR reviewed the coverage of the Manila newspapers (Philippine Daily Inquirer, The Philippine STAR, Manila Bulletin, The Manila Times, The Standard, The Daily Tribune, BusinessWorld, Business Mirror, and Malaya Business Insight) as well as the primetime TV newscasts (ABS-CBN 2’s TV Patrol, GMA 7’s 24 Oras, TV5’s Aksyon and CNN Philippines’ Network News) on the days Pulse Asia and SWS released their respective survey results, March 4 and March 14.

Prominence in Print

CMFR noted that during the 2004 national elections, the majority of survey reports appeared on the front page (“Surveys in the news: Front page treatment, mostly,” Philippine Journalism Review, April-July 2004). Surveys have kept that place of prominence since.

The results of the February Pulse Asia survey released on March 4 generated a total of 10 print reports, all of them on the front page; five were banner stories. The results of the SWS survey conducted from March 4 to 7 and released on March 14 were the subject of eight reports: five on the front page, two of them banner stories, and three on the inside pages.

The primetime TV newscasts produced fewer reports, with a total of seven stories on March 4 and only four on March 14.

Among the fundamentals that reports on survey results need to explain to readers and viewers is the meaning of the terms survey organizations commonly use. But most reports simply repeated what the surveys said, without explanation.

The most commonly used terms left unexplained were “statistically tied/statistical tie,” “margin of error/error margin,” “confidence level,” “mean,” and “median.” The primetime newscasts also frequently used the terms “margin of error” and “statistical tie” without explaining them.

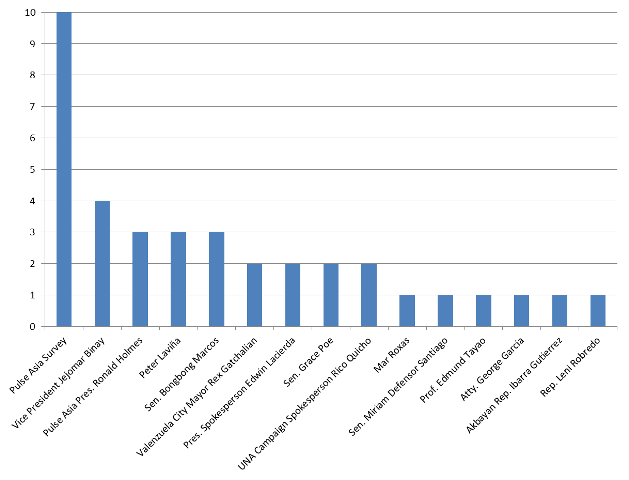

The reports’ sources were also limited to the survey groups and the results they cited, and to the candidates themselves or their spokespersons (see Figures 1 and 2).

Explaining the Methodology

Reports explaining the survey methodology were scant and usually limited to stating that the results were based on “face to face interviews with 1,800 registered voters nationwide,” as in the case of the Pulse Asia survey. There was one exception: 24 Oras’ Vicky Morales explained how the polling firm conducted the survey as she read the results on air on March 4. When the SWS survey came out, it was also 24 Oras that compared the new results with previous findings.
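As an illustration of the kind of explanation reports could have offered, the standard textbook formula for the margin of error of a percentage can be worked through for the 1,800-respondent sample cited above. This is a simplified sketch that assumes a simple random sample, a 95 percent confidence level (z = 1.96) and the most conservative proportion, p = 0.5; actual pollsters use more complex sampling designs, so the margins they publish may differ somewhat.

$$
\text{MoE} = z\sqrt{\frac{p(1-p)}{n}} = 1.96\sqrt{\frac{0.5 \times 0.5}{1{,}800}} \approx 1.96 \times 0.0118 \approx 0.023
$$

Under these assumptions the margin is roughly plus or minus 2.3 percentage points, so a candidate shown at 30 percent could stand anywhere from about 27.7 to 32.3 percent. This is why rivals separated by only a point or two are commonly reported as statistically tied.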

Sources

The survey itself was the primary source cited in all the published news reports; the spokespersons and other staff of the presidential candidates came second. With little effort to seek other insights, the reports failed to make the numbers meaningful.

Even reports on statements by the candidates, their spokespersons or their staff were usually limited to quoting expressions of elation over the results or skepticism about the surveys’ accuracy. In reporting the Pulse Asia survey, only the Inquirer sought the insights of a political science professor on particular candidates’ gains and used Pulse Asia president Ronald Holmes as a source.

Figure 1. Sources cited in print reports on the Pulse Asia survey (released March 4) and the SWS survey (released March 14).

A similar trend was evident in television reports (see Figure 2). They aired the contenders’ side, with only a few turning to other experts for additional insights.

Figure 2. Sources cited in primetime TV reports on the Pulse Asia survey (released March 4) and the SWS survey (released March 14).

Despite the general pattern of survey reporting, some media organizations produced work worth mentioning. On Feb. 18, Rappler posted “Confused by surveys: what do these terms mean?” which explained the terms commonly used in survey results (“Simplifying Survey terms,” CMFR, Mar. 7).

In October 2015, the Inquirer published “‘Answers are part of the question,’” a column by former SWS president Mahar Mangahas, who wrote: “Ideally, the pre-election surveys should also include probes into the voters’ awareness of, and reactions to, the said events, statements and actions. This will enable analysts to do statistical correlations of the said factors to the voters’ preferences among the potential candidates.”
